Last month we published an article that started with a single experiment. We searched “best Botox clinic in Southampton” across ChatGPT, Google AI Overview, and Perplexity, and the three platforms returned almost completely different lists of clinics. Same patient, same question, three different answers.

We argued that this had real implications for how UK aesthetics clinics should think about being found online, but we knew at the time that one search across one city wasn’t enough to support that argument properly.

So we did the work to find out if it held up.

We ran 54 more searches across nine UK locations and six treatments. We recorded every clinic that appeared, what their Google ranking was, whether they showed up in Google’s AI Overview, whether Perplexity recommended them, what their treatment pages looked like, what schema they used, and how many Google reviews they had.

That gave us precisely 314 clinic appearances to analyse. 164 unique clinics. Six treatments. Nine locations. Three AI platforms. One unified scoring system.

This is what the data showed. Some of it confirmed conventional wisdom. Most of it overturned it.

How We Did This

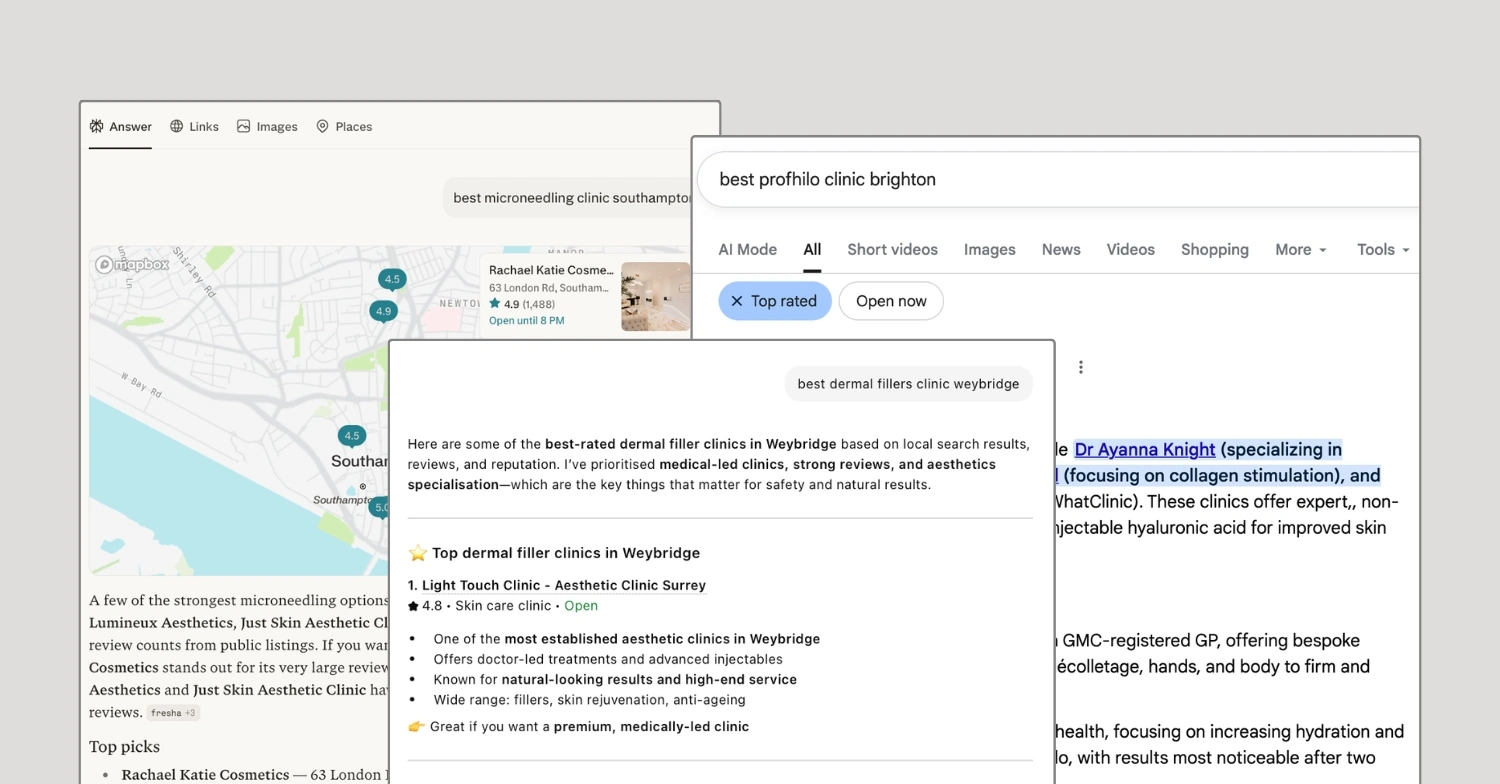

We picked nine UK locations spanning major aesthetics markets: Southampton, Surrey, Weybridge, Brighton, Bristol, Manchester, London (Harley Street), Birmingham, and Edinburgh. For each location, we ran six treatment-specific searches: lip fillers, Botox/anti-wrinkle, Profhilo, dermal fillers, microneedling, and laser hair removal.

For each search, we used ChatGPT’s top 5 recommendations as our starting clinic list. We then independently checked each clinic’s Google organic ranking, whether they were cited in Google’s AI Overview (when one appeared), whether Perplexity recommended them, and we audited their treatment-page content (page depth, FAQ content, schema markup, Google review count, and rating).

Throughout this article, when we refer to “AI visibility”, we mean the percentage of available platforms where a clinic appeared for a given search. If a search returned results from all three platforms (ChatGPT, Google AIO, and Perplexity) and a clinic appeared on two of them, that clinic scored 67% on that search. If Google didn’t trigger an AI Overview at all, the search was scored out of two platforms instead of three. We averaged these scores per clinic to produce the visibility numbers cited throughout.
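The scoring rule described above can be sketched in a few lines of Python. This is an illustration of the definition only, not the tooling used for the audit; the function names and the platform labels are assumptions made for the example.

```python
from statistics import mean

# Platform labels are illustrative names, not identifiers from the audit.
PLATFORMS = ("chatgpt", "google_aio", "perplexity")


def search_visibility(appeared_on, available):
    """Share of available platforms on which a clinic appeared for one search."""
    available = set(available)
    if not available:
        raise ValueError("at least one platform must have returned results")
    return len(set(appeared_on) & available) / len(available)


def clinic_visibility(searches):
    """Average the per-search scores for one clinic."""
    return mean(search_visibility(seen, avail) for seen, avail in searches)


searches = [
    # All three platforms returned results; the clinic appeared on two of them.
    ({"chatgpt", "perplexity"}, PLATFORMS),
    # No AI Overview triggered, so this search is scored out of two platforms.
    ({"chatgpt", "perplexity"}, {"chatgpt", "perplexity"}),
]

print(f"{search_visibility(*searches[0]):.0%}")  # 67%
print(f"{clinic_visibility(searches):.0%}")      # 83%
```

Scoring each search against only the platforms that returned results keeps a clinic from being penalised in markets where Google showed no AI Overview.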

A Note On What This Study Can And Can’t Show

We need to be precise about the limitations before going any further, because some of what follows will be tempting to over-interpret.

This research is a snapshot. AI search results shift quickly, sometimes minute to minute, and we didn’t repeat searches over time to measure volatility (although we did notice some during our collection). The findings here describe what we observed during March 2026, in nine UK cities, across six treatments. They may not generalise to other countries, other industries, or other points in time.

Because we used ChatGPT’s top 5 as the starting list, the dataset is better at identifying patterns among shortlisted clinics than at proving platform-wide prevalence. The cross-platform agreement findings (or lack of agreement) are robust because they compare clinics that ChatGPT had already validated. The headline numbers about ChatGPT itself are not, because the methodology biases them upward by definition.

Within those bounds, this is one of the most detailed cross-platform audits of UK aesthetics clinic AI visibility we’ve seen published. The patterns it surfaces are observations from a specific dataset, not universal laws. We’ve tried to write throughout in a way that makes that distinction clear.

The Headline: AI Platforms Barely Agree With Each Other, Or With Google

This is the finding that should reshape how UK aesthetics clinics think about search.

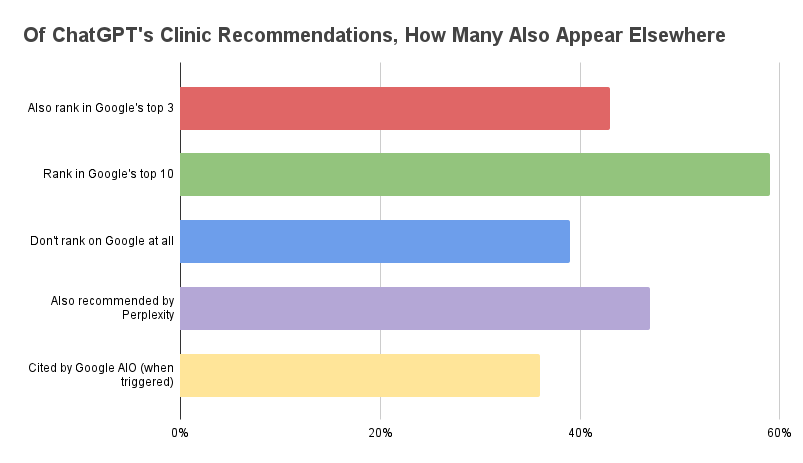

Of the 289 clinic recommendations ChatGPT gave us in our dataset, only 43% also rank in Google’s top 3 for the same search. 39% don’t rank on Google at all. Only 47% are also recommended by Perplexity. And when Google’s AI Overview did trigger, it cited ChatGPT’s picks just 36% of the time.
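For readers who want to run the same comparison on their own searches, here is a minimal sketch of how an overlap figure like these could be computed. The clinic names and search strings are hypothetical placeholders, and the `overlap_rate` helper is an assumption for illustration rather than the method used in the audit.

```python
def overlap_rate(chatgpt_picks, other_platform):
    """Fraction of ChatGPT (search, clinic) picks that also appear elsewhere."""
    picks = set(chatgpt_picks)
    if not picks:
        raise ValueError("no ChatGPT picks to compare")
    return len(picks & set(other_platform)) / len(picks)


# Hypothetical records, keyed by (search, clinic) so that a clinic only
# counts as a match when it appears for the same search.
chatgpt = {
    ("lip fillers southampton", "Clinic A"),
    ("lip fillers southampton", "Clinic B"),
    ("profhilo bristol", "Clinic C"),
    ("profhilo bristol", "Clinic D"),
}
google_top3 = {
    ("lip fillers southampton", "Clinic A"),
    ("profhilo bristol", "Clinic Z"),
}
perplexity = {
    ("lip fillers southampton", "Clinic B"),
    ("profhilo bristol", "Clinic C"),
    ("profhilo bristol", "Clinic D"),
}

print(f"Also in Google top 3: {overlap_rate(chatgpt, google_top3):.0%}")
print(f"Also on Perplexity:   {overlap_rate(chatgpt, perplexity):.0%}")
```

Because ChatGPT's picks are the denominator, the rate says how often other platforms agree with ChatGPT, not how often ChatGPT agrees with them.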

Three AI platforms, all answering the same patient questions, mostly disagreeing with each other.

Source: Sandbox Media UK Aesthetics AI Visibility Audit, March 2026 • 314 clinic appearances across 9 cities and 6 treatments

The disagreement gets sharper when you look at the top picks specifically. We isolated the 54 clinics ChatGPT named as its number one recommendation across all our searches. Of those, only 41% are also Google’s number one organic result. Five of ChatGPT’s number one picks don’t rank on Google at all.

The reverse is just as interesting. We found 11 clinics ranking number one on Google for their treatment search that ChatGPT didn’t recommend at all. Some surprising names sit on this list: sk:n Clinics (multiple cities) and 111 Harley Street. These are clinics that have done everything traditional SEO tells them to do, and ChatGPT still ignored them in our test.

If you’re still optimising only for Google, you’re competing in one channel out of three, and patients are increasingly using all three.

Five Things We Expected To Matter (That Didn’t)

Before this audit, we had assumptions. Most of them came from what the SEO/GEO industry has been telling aesthetics clinics for the last two years. The data made us rethink most of them…

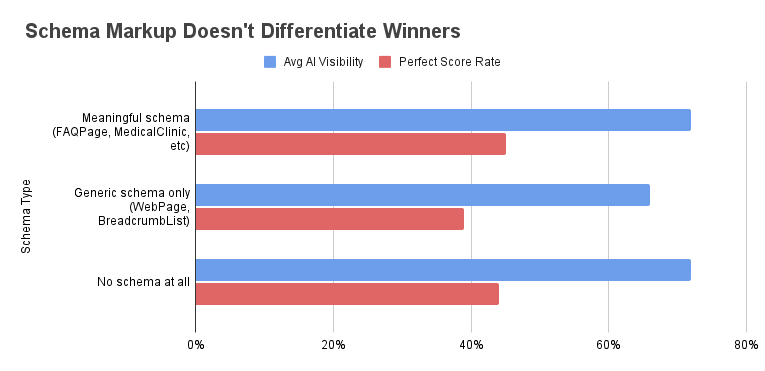

1. Schema markup didn’t differentiate winners in our sample

This is the finding most likely to attract pushback, so we want to be precise about what we mean.

We checked 240 clinic pages for schema markup. We split them into three groups: clinics with meaningful schema (FAQPage, MedicalClinic, MedicalBusiness, LocalBusiness, Service, Product), clinics with only generic schema (WebPage, BreadcrumbList, Organization, the kind of thing a CMS adds automatically), and clinics with no schema at all.
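The three-way split can be expressed as a simple classification. The sketch below uses the schema types named above; treating any other detected type as "generic" is an assumption made for the example, since the article does not say how unlisted types were handled.

```python
# Types listed in the article as "meaningful" schema.
MEANINGFUL_TYPES = {
    "FAQPage", "MedicalClinic", "MedicalBusiness",
    "LocalBusiness", "Service", "Product",
}


def schema_group(types_found):
    """Classify a page by the schema.org types detected on it."""
    types_found = set(types_found)
    if types_found & MEANINGFUL_TYPES:
        return "meaningful"
    if types_found:
        # Assumption: anything else (WebPage, BreadcrumbList, Organization,
        # or an unlisted type) is treated as generic.
        return "generic"
    return "none"


print(schema_group(["WebPage", "FAQPage"]))         # meaningful
print(schema_group(["WebPage", "BreadcrumbList"]))  # generic
print(schema_group([]))                             # none
```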

Source: Sandbox Media UK Aesthetics AI Visibility Audit, March 2026

Within our dataset, clinics with meaningful schema averaged 72% AI visibility. Clinics with no schema at all averaged 72% AI visibility. The numbers were, notably, identical.

Clinics with only generic schema actually performed slightly worse, at 66%, but this is almost certainly correlation rather than causation. The kind of clinic that has only generic schema also tends to have a thin, off-the-shelf WordPress site with little real content. The schema is a symptom, not a cause.

We isolated FAQPage schema specifically. 70% AI visibility for clinics with it. 70% for clinics without it. Also zero meaningful difference.

What this tells us: in our sample, schema markup didn’t compensate for thin pages, and visible FAQ content (real questions and answers in the page body) outperformed FAQ markup as a predictor of visibility. We are not arguing schema is worthless in every context. It may help with discovery, disambiguation, or platform eligibility in ways our audit couldn’t isolate. But the SEO industry has been selling FAQ schema as a primary lever for AI visibility, and the data we collected doesn’t support that framing.

If you have FAQ content on a strong page, wrapping it in FAQPage schema is fine and still worth doing. If your page is thin, schema won’t save you.

2. Five-star perfection isn’t a deciding factor

Star rating mattered far less than most people think.

The clinics in our high-visibility group averaged a 4.90 Google rating. The clinics in our low-visibility group averaged 4.82. That’s a 0.08 gap, which is essentially noise at this sample size.

The only place where rating clearly matters is at the bottom of the scale. Clinics rated under 4.5 stars averaged 45% visibility versus 73% for everyone above. So if your rating is below 4.5, fix it. But if you’re already at 4.7 or 4.8, chasing 5.0 isn’t going to move your AI visibility much.

3. Google ranking is an input, not an outcome

Google rank does correlate with AI visibility. Clinics ranking in Google’s top 3 averaged 76% AI visibility. Unranked clinics averaged 59%. There’s a real gap.

But the gap is much smaller than people assume, and 117 clinics with no Google ranking whatsoever still appeared in AI results. 30 clinics with zero Google presence scored 100% on AI platforms (more on this below).

That last number is worth pausing on. 30 clinics in our dataset have built AI search visibility without traditional SEO authority. The new GEO playbook is not the same as the old SEO playbook. There’s overlap, of course, but the parts that don’t overlap are where the visibility is actually being won and lost.

4. ChatGPT visibility doesn’t transfer to other platforms

We started this audit assuming that if ChatGPT recommends you, you’re probably doing well across the AI search landscape. The data killed that assumption.

53% of ChatGPT’s recommendations don’t appear in Perplexity. 64% of ChatGPT’s recommendations are not cited by Google’s AI Overview when one triggers. The platforms appear to use different signals.

The most divergent platform is Perplexity. Across every city, Perplexity disagreed with ChatGPT more often than it agreed. The disagreement was sharpest in Harley Street (only 22% overlap) and Surrey (29%).

If you’re optimising for “AI search” as a single thing, you may be missing the point. You’re optimising for at least three different systems with at least three different recommendation logics.

5. Site-wide schema sets don’t compensate for thin treatment pages

Several clinics in our audit had perfectly implemented site-wide schema (Organization, LocalBusiness, the lot), but treatment pages with 200 words of generic copy. They consistently underperformed.

Schema is a way of describing what’s already on the page. If the page is thin, the markup can’t rescue it. AI platforms are reading the actual content.

Three Things That Actually Predict AI Visibility

After throwing out what didn’t work, here’s what the data shows does matter…

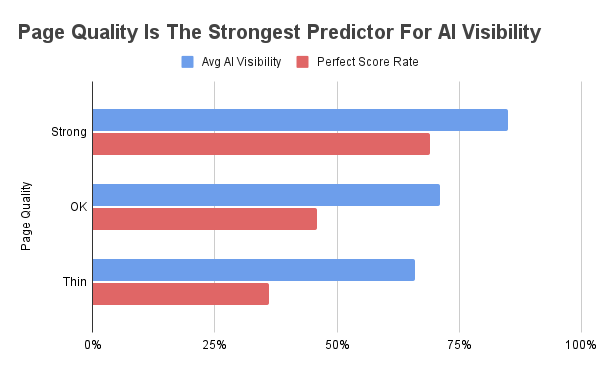

1. Page quality is the strongest single signal

Of all the variables we tracked, the depth and quality of the treatment page itself were the most reliable predictors of AI visibility.

Source: Sandbox Media UK Aesthetics AI Visibility Audit, March 2026

Clinics with what we graded as “Strong” treatment pages averaged 85% AI visibility. 69% of them scored a perfect 100% across all available platforms. Not a single Strong-page clinic in our dataset was completely invisible.

Clinics with “Thin” treatment pages averaged 66% visibility. Only 36% scored a perfect 100%. And 65% of our lowest-visibility clinics had thin treatment pages.

A clinic with a Strong treatment page was roughly nine times more likely to appear in our high-visibility group than our low-visibility group.

What “Strong” actually meant in our audits

We graded pages as Strong, OK, or Thin based on a consistent set of criteria. A Strong treatment page in our dataset typically had:

- 800+ words of original, treatment-specific content (not boilerplate that could apply to any treatment)

- A clear introduction explaining what the treatment is and who it’s for

- Sections covering: what the treatment treats, what to expect during the procedure, downtime and aftercare, realistic outcomes, risks and side effects, pricing or pricing context

- Real FAQ content addressing the questions patients actually ask (not generic ones bolted onto the bottom)

- Logical structure with proper headings (H2, H3) that an AI can use to extract specific answers

- At least one visual element (before/after, treatment photo, video, or diagram)

- Links to related treatments and the booking flow
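For a quick self-audit, the checklist above can be turned into a rough grading function. This is a hypothetical sketch, not the rubric used in the audit: the field names are invented for the example, the under-300-word cutoff for Thin comes from the description of Thin pages in this section, and requiring every item for Strong is a stricter reading than "typically had".

```python
from dataclasses import dataclass


@dataclass
class PageAudit:
    """Manual observations about one treatment page (hypothetical fields)."""
    word_count: int
    has_clear_intro: bool
    covers_core_sections: bool   # what it treats, procedure, aftercare, outcomes, risks, pricing
    has_real_faqs: bool
    has_heading_structure: bool  # proper H2/H3 headings
    has_visual: bool
    links_to_related_and_booking: bool


def grade(page: PageAudit) -> str:
    """Return 'Strong', 'OK', or 'Thin' under the assumptions stated above."""
    if page.word_count < 300:
        return "Thin"
    checklist = [
        page.word_count >= 800,
        page.has_clear_intro,
        page.covers_core_sections,
        page.has_real_faqs,
        page.has_heading_structure,
        page.has_visual,
        page.links_to_related_and_booking,
    ]
    # Assumption: every item must pass for Strong; anything else is OK.
    return "Strong" if all(checklist) else "OK"


print(grade(PageAudit(950, True, True, True, True, True, True)))       # Strong
print(grade(PageAudit(600, True, True, False, True, False, True)))     # OK
print(grade(PageAudit(250, False, False, False, False, False, True)))  # Thin
```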

One pattern we noticed but didn’t formally measure: most of the Strong pages in our dataset displayed pricing or pricing ranges directly on the treatment page. Many of the Thin pages did not, requiring patients to enquire to find out costs.

We didn’t isolate pricing as a variable in this audit, but it’s reasonable to expect that pages answering ‘how much does this cost’ upfront give AI platforms more useful structured information to extract. Worth noting that ASA rules around price advertising in aesthetics are strict, particularly for prescription-only treatments, so pricing should always be presented in a compliant way.

Thin pages, by contrast, were typically under 300 words, treated the page as a sales billboard rather than a patient resource, and skipped most of the consultation-style detail entirely. They often read like a price list with a CTA bolted on.

A useful test: read your treatment page as if you were a patient researching the treatment for the first time. If, after reading it, you would still need to call the clinic to understand what the treatment actually does and what to expect, the page is too thin. If the page answers your questions in advance, it’s probably in the right place.

That structure isn’t accidental. It maps directly to how AI platforms parse and summarise content. The platforms are looking for pages they can confidently extract specific answers from.

A page that already answers the questions a patient might ask gives the AI everything it needs.

2. Review volume matters, but it plateaus

We expected reviews to matter. We didn’t expect the relationship to be as clean as it was.

Clinics with under 50 reviews averaged 62% visibility. 50 to 99 reviews: 67%. 100 to 249 reviews: 73%. 250 to 499 reviews: 75%, the peak. Above 500 reviews, the average actually dropped back to 68%, probably because the 500+ bucket is dominated by national chains (sk:n, Therapie, Laser Clinics UK) that have the review volume but lack the deep, localised page content.

The sweet spot looks like 100 to 500 reviews. The threshold effect at 100 is particularly clear in the data. Below 100 reviews, AI visibility struggles. Above 100, it improves significantly.
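To see where a clinic falls, the review bands above can be written as a small lookup. The averages are the figures reported in this section; placing exactly 500 reviews in the top band is an assumption, since the article only says "above 500".

```python
import bisect

# Lower edges of each band after the first, taken from the figures above.
BAND_EDGES = [50, 100, 250, 500]
BAND_LABELS = ["under 50", "50 to 99", "100 to 249", "250 to 499", "500+"]
# Average AI visibility observed per band in this audit.
OBSERVED_VISIBILITY = [0.62, 0.67, 0.73, 0.75, 0.68]


def review_band(review_count):
    """Return (band label, average visibility observed for that band)."""
    if review_count < 0:
        raise ValueError("review count cannot be negative")
    i = bisect.bisect_right(BAND_EDGES, review_count)
    return BAND_LABELS[i], OBSERVED_VISIBILITY[i]


for count in (8, 99, 100, 280, 1200):
    label, visibility = review_band(count)
    print(f"{count:>5} reviews -> {label}: {visibility:.0%} average visibility")
```

These are band averages from one dataset, so the lookup describes where a clinic sits, not what its own visibility will be.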

This matches what we’d expect AI platforms to do. They can’t easily verify the quality of a clinic, but review volume is a clean trust signal. A clinic with 8 reviews is harder to confidently recommend than a clinic with 280, regardless of how good either one actually is.

What this suggests in practice: if your clinic has fewer than 100 Google reviews, building a review acquisition system is one of the higher-leverage things you can do for AI visibility. We’d still prioritise fixing thin treatment pages first, because page quality showed a stronger correlation in our data, but reviews are a close second.

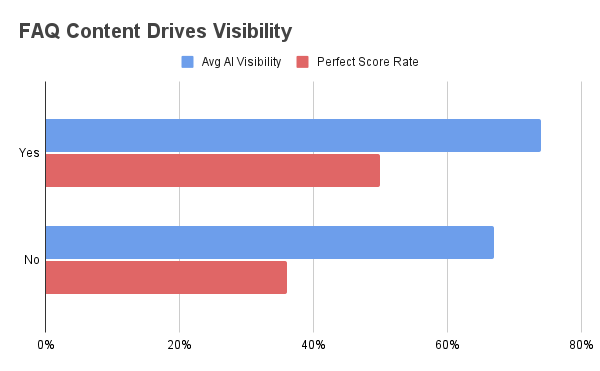

3. FAQ content (not schema) drives visibility

This one is subtle and important.

We tracked two separate things: whether a treatment page had visible FAQ content (real questions and answers in the page body), and whether it had FAQPage schema markup wrapping that content.

Source: Sandbox Media UK Aesthetics AI Visibility Audit, March 2026

FAQPage schema by itself: zero correlation with AI visibility (70% with vs 70% without).

FAQ content by itself: meaningful correlation. Pages with FAQ content averaged 74% visibility versus 67% without. 50% of FAQ pages scored a perfect 100% versus 36% of non-FAQ pages.

When AI Overviews triggered, the difference was even sharper. Pages with FAQ content were cited 62% of the time. Pages without were cited 20% of the time.

The lesson: AI platforms are reading the words on the page. They reward pages that already answer patient questions in plain language. Wrapping those questions in FAQPage schema is fine, and we’d recommend it as a small addition, but it’s the answers themselves that drive visibility.

The Combined Recipe

Looking at all the variables together, a clear pattern emerged. The clinics that consistently won across all three AI platforms shared a specific combination of traits: substantive treatment-page content, real FAQ-style answers within that content, and meaningful review volume above the 100 threshold.

We found this combination in 23% of our high-visibility clinics. We found it in just 3% of our low-visibility clinics. That combination is roughly eight times more common in winners than in losers.

A few clinics in our dataset hit every signal:

Quinn Clinics (Bristol) scored 100% across five treatment searches. 286 Google reviews at 4.9 stars. Strong treatment-page content. Ranks number 2 organically.

The Academy Clinic (Manchester) scored 93% across five treatment searches. Strong treatment-page content and consistent visibility across ChatGPT and Perplexity, despite Manchester being one of the cities where Google’s AI Overview did not trigger during our test.

Lumineux Aesthetics (Southampton) scored 93% across five treatment searches. 117 Google reviews at 5.0 stars. Strong treatment pages with structured FAQ content. Notably, Lumineux ranks 11th organically on Google for lip fillers in Southampton, but appears in the top 3 of both ChatGPT and Perplexity. This is exactly the pattern that defines the new playbook.

Light Touch Clinic (Weybridge) appeared in every single ChatGPT search we ran across both Surrey and Weybridge. 12 out of 12 appearances. Strong treatment pages, 214 Google reviews at 4.8 stars, FAQ content throughout. The clinic also illustrates a regional point: in Weybridge it scored a perfect 100% on every available platform. In Surrey, the same clinic struggled with Perplexity (0/6 visibility), suggesting the broader geographic search term dilutes its visibility.

These are not the biggest clinic chains in the UK. They are not the most expensive. They are the clinics whose treatment pages give AI systems the most to work with.

One question this naturally raises is what these winning clinics have in common at the platform level. We’ve classified the underlying tech stack of every clinic in this dataset and published the platform-layer follow-up. 60% are on WordPress, Wix sits at the bottom of every metric, and the headless paradox (modern infrastructure, thin content) is its own category.

Disclosure: Lumineux Aesthetics and Light Touch Clinic are Sandbox Media clients. We’ve named them because their performance in the dataset is genuinely notable, and the audit method was applied to them on the same basis as every other clinic. The findings would have been the same regardless. Just Skin and Quinn Clinics are not Sandbox clients and we have no commercial relationship with either.

The 30 Clinics Winning Without Google

One of the more striking patterns in the dataset deserves its own section.

Of the 164 clinics in our audit, 30 scored 100% on AI platforms despite having no Google organic ranking at all for their treatment search. Not the bottom of page one. No traditional ranking presence whatsoever.

These clinics include British Cosmetic Clinic in Bristol (perfect AI score, no Google ranking), The MediClinic in Brighton (perfect AI visibility across multiple treatments, also unranked), and Just Skin in Southampton (perfect AI visibility, no organic ranking).

They are not outliers in some niche treatment. They are clinics that have, by some combination of content, reviews, and brand presence, made themselves visible to AI without playing the traditional SEO game.

What do they have in common? Looking across the 30, the pattern that stood out was that almost all of them had substantial Google review volume (typically 100+ reviews at 4.7+ stars), clear treatment-specific pages (even where the pages weren’t always Strong by our criteria), and a clean, recognisable brand presence with consistent NAP (name, address, phone) information across the web.

We could not isolate exactly why each clinic won AI visibility without Google. The dataset doesn’t capture every possible signal (citations on directory sites, Instagram presence, mentions in editorial content, the language used in their reviews). What we can say with confidence: traditional Google ranking is not a prerequisite for AI visibility. The new playbook genuinely is different.

For clinic owners, this is the finding that probably matters most. It means an existing investment in Google rankings is not wasted (the correlations show it still helps), but it also means a clinic that has been losing on traditional SEO has a real path to visibility through other signals. AI search is not just SEO with extra steps. It is a different system that rewards different things.

Different Treatments, Different Rules

One of the most useful findings, and one most aesthetics agencies aren’t talking about, is that AI search behaves completely differently depending on the treatment.

For lip fillers, only 36% of ChatGPT’s picks rank in Google’s top 3. 49% don’t rank on Google at all. Lip fillers are the most “AI-native” treatment in our dataset, where the gap between AI recommendations and traditional SEO is widest.

For laser hair removal, the picture is the opposite. 53% overlap with Google’s top 3, the highest of any treatment. Laser hair removal is the most “SEO-aligned” treatment, dominated by chains (Therapie, Laser Clinics UK, sk:n) that have built strong traditional rankings.

For Profhilo, Google’s AI Overview triggered 58% of the time. For lip fillers, Botox, and dermal fillers, AI Overview triggered just 11 to 13% of the time. Profhilo is the treatment where Google has decided patients want explanations rather than local listings.

What this means: a single GEO strategy applied across all your treatment pages is leaving visibility on the table. Each treatment has its own AI search profile, and the optimisation playbook differs depending on whether Google is serving an AI Overview or routing to the local pack.

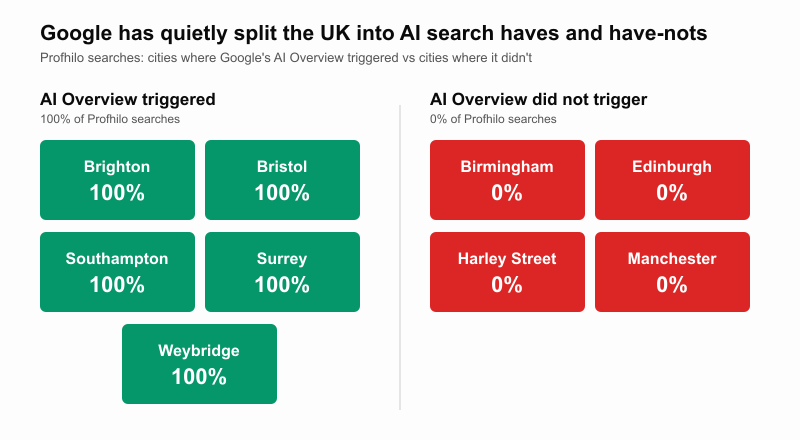

The Regional Reality: Google’s Quiet Geographic Split

This was the biggest surprise in the dataset, and it’s a finding nobody else seems to have published.

Source: Sandbox Media UK Aesthetics AI Visibility Audit, March 2026

Google’s AI Overview availability for aesthetic treatments was not consistent across the UK during our test window. It was regionally split. In some markets, it triggered reliably. In others, it didn’t trigger at all.

Looking at Profhilo specifically (the treatment most likely to trigger AIOs), the pattern was binary in our dataset:

AI Overview triggered 100% of the time in Brighton, Bristol, Southampton, Surrey, and Weybridge.

AI Overview triggered 0% of the time in Birmingham, Edinburgh, London (Harley Street), and Manchester.

There was no city in our test where the trigger was sometimes yes, sometimes no. The split appears to reflect a structural decision by Google about which markets get AI Overviews on aesthetics queries, though we want to be careful about the cause.

Possible explanations include Google’s YMYL (Your Money or Your Life) classification, which applies extra scrutiny to medical and health-related content; staged regional rollouts of AIO functionality; differences in local pack quality that influence whether Google substitutes AIO for traditional results; or account variation we couldn’t fully control for. The pattern itself is what we observed; the cause is harder to pin down without access to Google’s internal logic.

What we can say with confidence: clinics on the wrong side of this split are competing in a different search environment than clinics on the right side. Before optimising for AI Overviews, find out whether they even trigger in your market for your specific treatments. The clinics in Birmingham, Edinburgh, Harley Street, and Manchester aren’t losing the AI Overview battle in our data. They aren’t being given a battle to fight at all.

City-level visibility breakdown (averaged across all platforms and treatments):

- Bristol: 77%

- Weybridge: 77%

- Edinburgh: 74%

- Southampton: 72%

- Birmingham: 71%

- Manchester: 67%

- Brighton: 64%

- London (Harley Street): 61%

- Surrey: 56%

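Two of these numbers should make every aesthetics clinic owner pause.

The most prestigious aesthetics market in the UK, Harley Street, has the second-worst AI visibility of any location we tested. Perplexity agreed with ChatGPT just 22% of the time on Harley Street recommendations. Patients searching for premium aesthetics in central London are getting the least consistent AI guidance of anywhere in the country.

Surrey sits at the very bottom, at 56%. Perplexity overlapped with ChatGPT only 29% of the time there, and a clinic that scored a perfect 100% on the Weybridge searches scored 0/6 on Perplexity for the Surrey ones, which suggests that a broad county-level search term dilutes visibility.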

The Findability Problem

A theme that emerged throughout our audit, and one harder to capture in the numbers, is the issue of page findability.

Some clinics had decent treatment-page content, but it was effectively hidden. We came across pages buried three or four levels deep in the navigation, treatments that didn’t have their own page at all (lumped together with other services), pages with no internal links pointing to them, and pages that we could only find using a site: search.

If a clinic’s treatment page is hard to find, AI platforms cannot use it as a citation source, regardless of how good the content is. The page needs to be a properly linked, properly titled, properly indexed asset.

A useful test: if you can’t navigate to your treatment page in three clicks from your homepage, your AI visibility is being capped by site structure, not content quality.
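The three-click test can also be checked programmatically once you have a map of your internal links. The sketch below runs a breadth-first search over a hand-written link map; the URLs are hypothetical, and building the map from a real site (by crawling or exporting from an SEO tool) is left out.

```python
from collections import deque


def click_depths(links, start="/"):
    """Minimum number of clicks from `start` to every reachable page."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, ()):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal link map: page -> pages it links to.
site = {
    "/": ["/treatments", "/about"],
    "/treatments": ["/treatments/injectables"],
    "/treatments/injectables": ["/treatments/injectables/face"],
    "/treatments/injectables/face": ["/treatments/injectables/face/lip-fillers"],
}
treatment_pages = ["/treatments/injectables/face/lip-fillers", "/profhilo"]

depths = click_depths(site)
for page in treatment_pages:
    depth = depths.get(page)
    if depth is None:
        print(f"{page}: not reachable through internal links")
    elif depth > 3:
        print(f"{page}: {depth} clicks from the homepage (more than three)")
    else:
        print(f"{page}: {depth} clicks from the homepage")
```

A page missing from the result entirely corresponds to the worst case described above: one that can only be found with a site: search.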

What This Means For Your Clinic

Pulling all of this together, here is what the data suggests about where to invest your time and budget if you want to win on AI search. We’ve ordered these by the strength of the signal in our dataset, which is also the order we’d recommend tackling them.

1. Treat your treatment pages as the primary asset

This is the single highest-leverage thing in the data. The treatment page is what AI platforms read when they decide whether to cite you. It is also, for most clinics we audited, the weakest part of their digital presence.

Audit each of your treatment pages against the Strong-page criteria above. Be honest. A page with 250 words, a price, and a “Book Now” button is not enough. The page needs to function as a comprehensive patient resource: what the treatment is, who it’s for, what to expect during the procedure, what happens afterwards, what results look like, what the risks are, and how the clinic specifically delivers it.

Two practical points clinic owners often miss:

The page should be unique to the treatment. If your “Lip Fillers” page reads almost identically to your “Dermal Fillers” page with the words swapped out, AI platforms will struggle to differentiate them and you’ll lose visibility on both. Each treatment deserves its own consultation-grade content.

The page should sound like your clinic, not like a Wikipedia entry. AI platforms aren’t just looking for completeness; they’re looking for content that has a clear source identity. Pages that read like generic medical information get treated as generic medical information.

2. Build a Google review acquisition system

If you have under 100 reviews, this is your second priority. The threshold effect in our data was clear: visibility improves significantly once you cross 100. Below that, you’re capped regardless of how good your content is.

The system doesn’t need to be complicated. It needs to be consistent. Most clinics already ask for reviews informally and get them sporadically. A system means every patient is asked, at the right moment in their journey, through a channel that makes leaving a review take less than 90 seconds. The clinics in our dataset above the 100-review threshold almost all had some version of this, whether they thought of it formally or not.

A note on rating: don’t obsess about reaching 5.0. Once you’re above 4.7, the rating itself is largely irrelevant in our data. Volume is what moves the needle.

3. Add real FAQ content to every treatment page

Not FAQ schema (which our data shows is essentially neutral), but real, useful FAQs written into the page. The questions should be the actual questions patients ask in consultations, not the generic ones that come pre-loaded with most CMS templates.

A useful exercise: ask your front-of-house team to list the questions they get asked most often about each treatment. Those are your FAQ questions. Answer them properly, in plain language, in the page body. If you also want to wrap them in FAQPage schema, that’s fine.
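If you do add the markup, FAQPage schema is a JSON-LD object listing each question with its accepted answer. The sketch below generates it from the same question-and-answer pairs that appear in the page body; the `faq_jsonld` helper and the sample text are placeholders for illustration, and the answers should mirror what is visibly on the page.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Placeholder content: replace with the questions your front-of-house team
# actually hears, and the answers exactly as written in the page body.
faqs = [
    ("How long does the appointment take?", "Your clinic's own answer goes here."),
    ("What aftercare is needed?", "Your clinic's own answer goes here."),
]

print('<script type="application/ld+json">')
print(json.dumps(faq_jsonld(faqs), indent=2))
print("</script>")
```

Generating the markup from the visible content keeps the two in step, which fits the finding that the answers themselves, not the wrapper, are what correlated with visibility.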

The reason this works is mechanical. AI platforms are looking for explicit question-answer pairs they can extract and quote. A treatment page that already contains those pairs is much easier to cite than a page that buries the same information in marketing prose.

4. Audit your site structure for findability

This one costs nothing but is genuinely under-prioritised. Open your homepage and try to navigate to each of your treatment pages in three clicks. If you can’t, fix it. AI platforms (and patients) cannot use what they cannot find.

Common issues we saw repeatedly: treatments missing from the main navigation, treatments lumped together on a single “Injectables” page, treatments mentioned in blog posts but with no dedicated landing page, and treatment URLs so deep they require breadcrumbs to escape.

Every treatment you offer should have its own page, linked from your main navigation, indexed by Google, and titled in a way a patient would actually search for. If those four things aren’t true, fix them before you do anything else.

5. Map your AI Overview availability

Before optimising for AI Overviews specifically, find out whether they even trigger in your market for your treatments. Run your top three treatment searches in Google (city + treatment name). If you see an AI Overview, you’re in a market where AIO matters and is worth optimising for. If you see only the local pack, AIO is not currently a viable channel in your market and your strategy should focus on ChatGPT and Perplexity instead.

This will likely change as Google rolls AIO out more broadly, but right now, applying the same AIO strategy in Birmingham as you would in Brighton is wasted effort.

6. Treat the three platforms as three different optimisation targets

ChatGPT, Google AIO, and Perplexity are not interchangeable. They use different signals, weight them differently, and produce different recommendations from the same underlying inputs.

In practice, this means treating each platform as a separate channel:

For ChatGPT, the highest-leverage actions in our data were strong content and a clean, well-structured site that’s easy for the model to interpret.

For Google AIO, the strongest signals were FAQ content, classic SEO authority (you still need to rank well to be cited as a source), and meaningful schema where appropriate.

For Perplexity, the patterns were less clear in our dataset, but the clinics that won on Perplexity tended to have strong third-party citations: mentions in editorial content, directory listings, and external sources that Perplexity’s citation logic seems to weight heavily.

You don’t need three separate strategies. You need one strategy that recognises the three platforms have different priorities and covers all of them.

A Final Note

When we ran our first audit last month, the conclusion was that AI search results for UK aesthetics were chaotic and inconsistent. After 314 clinic appearances of analysis, we’d refine that. The results aren’t chaotic. They’re following different rules than traditional search, and most clinics haven’t realised the rules have changed.

The clinics that win in this new environment are not always the clinics that win on Google. They are the clinics whose treatment pages are easiest for AI to trust, summarise, and recommend.

Not just the easiest to crawl. Not just the easiest to rank. The easiest to recommend.

That is the real shift this audit shows.

We’ve extended this research into the platform layer in a follow-up piece. If you’re curious about what 60% of UK aesthetic clinics run on, why Wix is quietly losing, and what happens when AI builders arrive, read the platform-layer audit here.

Want to See Where You Stand?

We’ve been running free AI visibility audits for selected UK aesthetics clinics as part of this research. Following the response to our first article, we’re opening a second round.

If you’d like us to run the same audit on your clinic across ChatGPT, Perplexity, and Google AI Overview, plus a treatment-page audit using the same methodology described in this piece, send us a message. We’ll send you a written report showing exactly where you appear (and don’t), what the gaps are, and the highest-leverage actions to fix them.

No sales pitch, no obligation. We’re using these audits to expand our dataset for the next piece of research, and we think the findings will be useful to you whether or not you decide to work with us.

This research was conducted by Sandbox Media in March 2026. The full anonymised dataset and methodology are available on request.

Zach Havard is the founder of Sandbox Media, a digital growth agency specialising in SEO, Generative Engine Optimisation (GEO), and AI-powered growth systems for UK aesthetics clinics.